Vision-language-action models, commonly referred to as VLA models, are artificial intelligence models that combine three fundamental abilities: visual perception, natural-language understanding, and physical action. Unlike conventional robot controllers driven by fixed rules or limited sensor data, VLA models interpret what they see, understand spoken or written instructions, and decide on actions in real time. This combination lets robots operate in dynamic, human-centered environments where unpredictability and variation are the norm.

At a high level, these models connect camera input to semantic understanding and then to the corresponding motor actions. A robot can look at a cluttered table, interpret a spoken command such as "pick up the red mug next to the laptop," and carry out the task even if it has never seen that exact arrangement before.
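To make concrete what interpreting "pick up the red mug next to the laptop" involves, here is a hand-written sketch of the grounding step: matching the noun and adjective to detected objects and resolving the spatial relation. It assumes an upstream perception stage already produced structured detections and reads "next to" as "closest to"; `DETECTIONS` and `ground` are hypothetical names, and a VLA model would learn this mapping end to end rather than through explicit rules.

```python
import math

# Hypothetical detections from an upstream perception stage:
# object class, colour attribute, and table-plane centre (x, y) in metres.
DETECTIONS = [
    {"label": "mug", "color": "red", "xy": (0.2, 0.4)},
    {"label": "mug", "color": "red", "xy": (1.5, 0.3)},
    {"label": "mug", "color": "blue", "xy": (0.3, 0.5)},
    {"label": "laptop", "color": "grey", "xy": (0.4, 0.4)},
]


def ground(label, color, anchor_label, detections=DETECTIONS):
    """Resolve '<color> <label> next to the <anchor_label>' to one detection.

    Keeps detections matching the noun and adjective, then reads
    'next to' as 'closest to the anchor object'. Returns None if either
    the target or the anchor is missing.
    """
    anchors = [d for d in detections if d["label"] == anchor_label]
    candidates = [
        d for d in detections if d["label"] == label and d["color"] == color
    ]
    if not anchors or not candidates:
        return None
    anchor_xy = anchors[0]["xy"]
    return min(candidates, key=lambda d: math.dist(d["xy"], anchor_xy))


# "Pick up the red mug next to the laptop"
target = ground("mug", "red", "laptop")
print(target["xy"])  # (0.2, 0.4)
```

Two red mugs match the colour and noun; the spatial relation is what singles out the one beside the laptop.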

Why Traditional Robotic Systems Fall Short

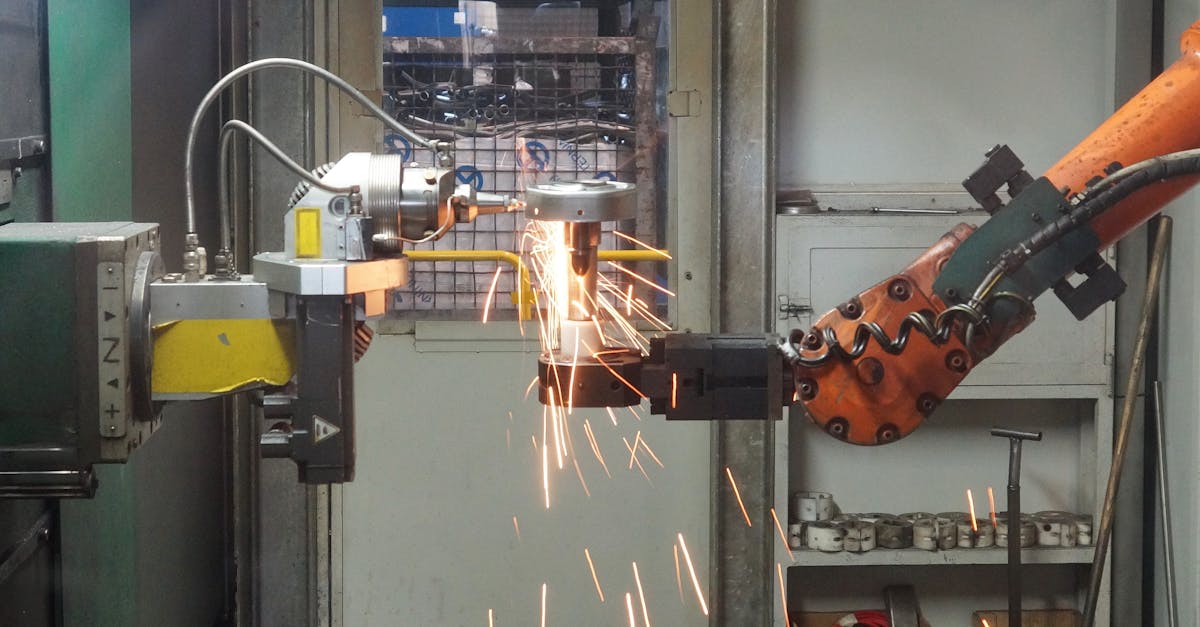

Conventional robots perform remarkably well in tightly controlled settings such as factories, where lighting, object placement, and tasks stay largely consistent, but they struggle in homes, hospitals, warehouses, and public spaces. Their shortcomings often stem from compartmentalized subsystems: a vision component that detects objects, a language module that interprets instructions, and a control unit that drives the actuators, each with only a limited shared understanding of the surroundings.

Such fragmentation results in several issues:

- High engineering costs to define every possible scenario.

- Poor generalization to new objects or layouts.

- Limited ability to interpret ambiguous or incomplete instructions.

- Fragile behavior when the environment changes.

VLA models address these issues by learning shared representations across perception, language, and action, enabling robots to adapt rather than rely on rigid scripts.
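One way to picture a shared representation is a single vector space in which image regions and text phrases can be compared directly, as in CLIP-style contrastive models. The toy sketch below assumes such a space already exists; the three-dimensional vectors and the names `IMAGE_EMBEDDINGS`, `TEXT_EMBEDDINGS`, and `best_match` are hypothetical, and real encoders produce vectors with hundreds of dimensions.

```python
import math

# Hypothetical embeddings from a vision encoder and a text encoder that
# were trained to share one vector space.
IMAGE_EMBEDDINGS = {
    "crop_0": [0.9, 0.1, 0.0],  # e.g. a red mug
    "crop_1": [0.1, 0.8, 0.2],  # e.g. a laptop
    "crop_2": [0.0, 0.2, 0.9],  # e.g. a plant
}
TEXT_EMBEDDINGS = {
    "red mug": [0.85, 0.15, 0.05],
    "laptop": [0.05, 0.9, 0.1],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def best_match(phrase):
    """Return the image crop whose embedding is closest to the phrase."""
    query = TEXT_EMBEDDINGS[phrase]
    return max(IMAGE_EMBEDDINGS, key=lambda k: cosine(IMAGE_EMBEDDINGS[k], query))


print(best_match("red mug"))  # crop_0
print(best_match("laptop"))   # crop_1
```

Because both modalities live in the same space, no hand-written mapping between "detector output" and "parser output" is needed, which is the coupling a modular pipeline has to engineer by hand.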

Vision: How Robots Interpret Their Surroundings

Vision gives robots contextual awareness. Contemporary VLA models typically build on large visual encoders pretrained on web-scale collections of images and video, in some cases billions of examples, which lets them identify objects, assess spatial relations, and interpret scenes semantically.

A hospital service robot, for instance, can visually distinguish medical devices, patients, and staff uniforms. Beyond detecting outlines, it interprets the scene: which objects can be moved, which zones are off-limits, and which elements matter for the task at hand. This semantic understanding of the scene underpins safe and efficient operation.
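The kind of scene interpretation described above can be sketched as a filter over labeled objects, assuming an upstream perception stage has already produced positions and a movability flag for each object. `SceneObject`, `Zone`, and `manipulable` are hypothetical names; a VLA model would usually learn such distinctions implicitly, so this only illustrates the information the robot needs.

```python
from dataclasses import dataclass


@dataclass
class SceneObject:
    label: str
    position: tuple  # (x, y) in metres, room frame
    movable: bool


@dataclass
class Zone:
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, point):
        """True if an (x, y) point lies inside this axis-aligned rectangle."""
        x, y = point
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def manipulable(objects, off_limits):
    """Labels of objects that are movable and outside every off-limits zone."""
    return [
        obj.label
        for obj in objects
        if obj.movable and not any(z.contains(obj.position) for z in off_limits)
    ]


scene = [
    SceneObject("wall-mounted monitor", (1.0, 2.0), movable=False),
    SceneObject("supply cart", (3.0, 1.0), movable=True),
    SceneObject("instrument tray", (0.5, 0.5), movable=True),
]
zones = [Zone("sterile field", 0.0, 0.0, 1.0, 1.0)]
print(manipulable(scene, zones))  # ['supply cart']
```

The instrument tray is movable but sits inside the restricted zone, so it is excluded along with the fixed monitor.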

Language as a Flexible Interface

Language transforms how humans interact with robots. Rather than relying on specialized programming or control panels, people can use natural instructions. VLA models link words and phrases directly to visual concepts and motor behaviors.

This has several advantages:

- Non-expert users can instruct robots without specialized training.

- Commands can be abstract, high-level, or conditional.

- Robots can ask clarifying questions when instructions are ambiguous.
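A simple policy for deciding when to ask is sketched below, assuming the robot has already found the set of objects that match the instruction: act if exactly one candidate remains, otherwise ask. `resolve_or_ask` and its return format are hypothetical; learned models may instead estimate their own uncertainty before choosing to ask.

```python
def resolve_or_ask(phrase, candidates):
    """Act on a unique match, otherwise return a clarifying question.

    phrase: the noun phrase from the instruction, e.g. "cup".
    candidates: (object_id, description) pairs already judged to match
    the phrase; how they were matched is outside this sketch.
    """
    if len(candidates) == 1:
        return {"target": candidates[0][0]}
    if not candidates:
        return {"question": f"I can't find the {phrase}. Can you point to it?"}
    options = " or ".join(desc for _, desc in candidates)
    return {"question": f"Which {phrase} do you mean: {options}?"}


print(resolve_or_ask("cup", [("obj_3", "the cup on the left")]))
# {'target': 'obj_3'}
print(resolve_or_ask("cup", [("obj_3", "the cup on the left"),
                             ("obj_7", "the cup by the sink")]))
# {'question': 'Which cup do you mean: the cup on the left or the cup by the sink?'}
```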

Consider a warehouse, where a supervisor might say, "Reorganize the shelves so heavy items are on the bottom." The robot interprets this goal, visually assesses the shelf contents, and plans a sequence of actions without explicit step-by-step guidance.
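Once a goal like "heavy items on the bottom" has been interpreted, turning it into a plan can be as simple as sorting by weight and diffing against the current layout. This sketch assumes item weights are already known (for example, estimated from appearance or read from labels) and that every shelf holds a fixed number of items; `plan_shelf_layout` and `moves_needed` are hypothetical, and the move list ignores temporary capacity limits during execution.

```python
def plan_shelf_layout(items, shelf_capacity):
    """Assign items to shelves so heavier items go lower.

    items: list of (name, weight_kg). Shelf 0 is the bottom shelf.
    Returns a list of shelves, each a list of item names.
    """
    ordered = sorted(items, key=lambda item: item[1], reverse=True)
    return [
        [name for name, _ in ordered[start:start + shelf_capacity]]
        for start in range(0, len(ordered), shelf_capacity)
    ]


def moves_needed(current, target):
    """List (item, from_shelf, to_shelf) moves that turn `current` into `target`."""
    where_now = {name: i for i, shelf in enumerate(current) for name in shelf}
    return [
        (name, where_now[name], i)
        for i, shelf in enumerate(target)
        for name in shelf
        if where_now[name] != i
    ]


items = [("paper towels", 2.0), ("paint can", 12.5), ("toolbox", 8.0), ("tape", 0.3)]
current = [["tape", "paper towels"], ["paint can", "toolbox"]]
target = plan_shelf_layout(items, shelf_capacity=2)
print(target)  # [['paint can', 'toolbox'], ['paper towels', 'tape']]
print(moves_needed(current, target))
```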

Action: Moving from Insight to Implementation

The action component is where intelligence takes practical form. VLA models translate observed conditions and verbal goals into motor commands such as grasping, navigating through an environment, or handling tools. These actions are not fixed in advance; they are continually refined in response to incoming visual input.
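Some VLA models (RT-2 is a well-known example) emit motor commands as discrete tokens, the same way a language model emits words, by splitting each continuous action dimension into a fixed number of bins. The sketch below shows that encoding and its inverse; the 256-bin default, the ±5 cm range, and the function names are illustrative assumptions rather than any specific model's configuration.

```python
def discretize(value, low, high, num_bins=256):
    """Map a continuous value in [low, high] to an integer bin index."""
    clipped = min(max(value, low), high)
    fraction = (clipped - low) / (high - low)
    return min(int(fraction * num_bins), num_bins - 1)  # keep `high` in the last bin


def undiscretize(index, low, high, num_bins=256):
    """Map a bin index back to the centre of its bin."""
    width = (high - low) / num_bins
    return low + (index + 0.5) * width


# Hypothetical end-effector displacement (x, y, z) in metres, range [-0.05, 0.05].
delta = [0.012, -0.030, 0.050]
tokens = [discretize(v, -0.05, 0.05) for v in delta]
recovered = [undiscretize(t, -0.05, 0.05) for t in tokens]
print(tokens)  # [158, 51, 255]
print([round(r, 4) for r in recovered])
```

The round trip loses at most half a bin width per dimension, which is the precision trade-off of treating actions as tokens.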

Continual refinement lets robots recover from errors: they can tighten their grip when an item starts to slip and change course when an obstacle appears. Some robotics studies report that systems built on integrated perception-action models raise task completion rates by more than 30 percent over modular pipelines in unpredictable settings.
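The feedback loop can be sketched as a controller that re-reads its observations on every cycle instead of executing a fixed script. In a VLA model the correction would come from the learned policy re-run on fresh camera frames; here the signals `slip_detected` and `obstacle_ahead`, the function `control_step`, and all numeric limits are hypothetical stand-ins.

```python
def control_step(grip_force, slip_detected, obstacle_ahead, heading_deg,
                 force_step=2.0, max_force=40.0, detour_deg=15.0):
    """One feedback iteration: adjust grip and heading from new observations.

    Returns the updated (grip_force, heading_deg).
    """
    if slip_detected:
        # Tighten the grasp, but never beyond a safe maximum force.
        grip_force = min(grip_force + force_step, max_force)
    if obstacle_ahead:
        # Steer away from the obstacle instead of keeping the original path.
        heading_deg = (heading_deg + detour_deg) % 360
    return grip_force, heading_deg


# Simulated observations for five control cycles: (slip_detected, obstacle_ahead).
observations = [(False, False), (True, False), (True, True), (False, False), (False, True)]
force, heading = 10.0, 0.0
for slip, obstacle in observations:
    force, heading = control_step(force, slip, obstacle, heading)
    print(f"force={force:.1f} N, heading={heading:.0f} deg")
```

After the five cycles the grip has been tightened twice (to 14 N) and the heading shifted twice (to 30 degrees), each change triggered only by what was observed in that cycle.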

Learning from Large-Scale, Multimodal Data

One reason VLA models are advancing rapidly is access to large, diverse datasets that combine images, videos, text, and demonstrations. Robots can learn from:

- Human demonstrations captured on video.

- Simulated environments with millions of task variations.

- Paired visual and textual data describing actions.
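The sources listed above differ in what they contain: human videos usually lack robot actions, while simulation provides them. A common approach is to store everything in one record format and mix sources in chosen proportions during training. The sketch below assumes that approach; the `Episode` fields, source names, and mixing weights are hypothetical, and real projects choose their own formats and ratios.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Episode:
    source: str        # "human_video", "simulation", or "captioned"
    instruction: str   # natural-language description of the task
    frames: list = field(default_factory=list)   # image identifiers
    actions: list = field(default_factory=list)  # empty for video-only data


def sample_batch(pools, weights, batch_size, rng):
    """Draw a training batch that mixes data sources in fixed proportions.

    pools: dict source -> list of Episode; weights: dict source -> relative weight.
    """
    sources = list(pools)
    chosen = rng.choices(sources, weights=[weights[s] for s in sources], k=batch_size)
    return [rng.choice(pools[s]) for s in chosen]


pools = {
    "human_video": [Episode("human_video", "open the drawer", ["v0_f0", "v0_f1"])],
    "simulation": [Episode("simulation", "open the door", ["s0_f0"], [[0.1, 0.0, 0.2]])],
    "captioned": [Episode("captioned", "a hand lifts a cup", ["c0_f0"])],
}
weights = {"human_video": 0.3, "simulation": 0.5, "captioned": 0.2}
batch = sample_batch(pools, weights, batch_size=8, rng=random.Random(0))
print([ep.source for ep in batch])
```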

Learning from such varied data helps next-gen robots generalize skills. A robot trained to open doors in simulation may be able to transfer that skill to different door types in the real world, even when handles and surroundings vary significantly.
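Domain randomization is one widely used technique for this kind of simulation-to-reality transfer: each simulated episode varies properties such as handle type, geometry, mass, and lighting, so a policy cannot overfit to a single configuration. The parameter names, ranges, and `randomized_door_task` function below are hypothetical.

```python
import random

HANDLE_TYPES = ["lever", "knob", "push bar", "pull handle"]


def randomized_door_task(rng):
    """Sample one simulated 'open the door' variation (domain randomization)."""
    return {
        "handle": rng.choice(HANDLE_TYPES),
        "handle_height_m": round(rng.uniform(0.8, 1.2), 2),
        "door_mass_kg": round(rng.uniform(5.0, 40.0), 1),
        "light_intensity": round(rng.uniform(0.3, 1.5), 2),  # relative to nominal
        "hinge_side": rng.choice(["left", "right"]),
    }


rng = random.Random(42)  # fixed seed so the generated task set is reproducible
tasks = [randomized_door_task(rng) for _ in range(1000)]
print(tasks[0])
print(sorted({t["handle"] for t in tasks}))
```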

Real-World Use Cases Emerging Today

VLA models are already influencing real-world applications. Logistics robots use them for mixed-item picking, recognizing products by both their visual features and their printed labels. Domestic robotics prototypes respond to spoken instructions for household tasks, such as cleaning designated spots or fetching items for elderly users.
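Recognizing a product "by both its visual features and its printed label" can be illustrated with a simple score fusion, assuming visual similarity scores come from an upstream model and the label text from OCR. The linear weighting and the use of Python's `difflib.SequenceMatcher` for text similarity are stand-ins chosen for this sketch, not a description of any deployed system; `text_score`, `identify`, and `alpha` are hypothetical.

```python
from difflib import SequenceMatcher


def text_score(read_label, catalog_name):
    """Similarity in [0, 1] between OCR'd label text and a catalog name."""
    return SequenceMatcher(None, read_label.lower(), catalog_name.lower()).ratio()


def identify(visual_scores, read_label, alpha=0.6):
    """Rank catalog items by a weighted mix of visual and textual evidence.

    visual_scores: dict catalog_name -> visual similarity in [0, 1].
    alpha weights the visual score; (1 - alpha) weights the label text.
    """
    combined = {
        name: alpha * v + (1 - alpha) * text_score(read_label, name)
        for name, v in visual_scores.items()
    }
    return max(combined, key=combined.get)


# Two boxes that look almost identical; the printed label separates them.
visual = {"Oat Cereal 500g": 0.81, "Oat Cereal 1kg": 0.83}
print(identify(visual, "OAT CEREAL 500 G"))  # Oat Cereal 500g
```

Vision alone would slightly prefer the 1 kg box here; the label text tips the decision, which is the benefit of combining the two signals.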

In industrial inspection, mobile robots use vision to spot irregularities, language understanding to clarify inspection objectives, and precise motion control to align sensors. Early deployments reportedly cut manual inspection effort by as much as 40 percent, a clear economic benefit.

Safety, Adaptability, and Human Alignment

Another key benefit of vision-language-action models is improved safety and closer alignment with human intent: robots that understand both the visual context and what people mean are less likely to take unintended or harmful actions.

For example, if a person says "do not touch that" while pointing at an object, the robot can link the visual reference to the linguistic constraint and adjust its behavior accordingly. This kind of grounded understanding is essential for robots operating alongside people in shared spaces.
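The "do not touch that" example can be sketched in two steps: resolve the pointing gesture to an object, then record that object as a constraint the planner must respect. This assumes the hand position and pointing direction have already been estimated in a 2-D table frame and matches the utterance with a literal string comparison; `pointed_object`, `handle_utterance`, and the `forbidden` set are hypothetical, and a VLA model would learn this association rather than rely on a fixed phrase.

```python
import math


def pointed_object(objects, origin, direction):
    """Name of the object whose centre lies closest to a 2-D pointing ray.

    objects: dict name -> (x, y); origin: hand position (x, y);
    direction: pointing vector (dx, dy). Objects behind the hand are ignored.
    """
    dx, dy = direction
    norm = math.hypot(dx, dy)
    ux, uy = dx / norm, dy / norm
    best, best_dist = None, float("inf")
    for name, (x, y) in objects.items():
        px, py = x - origin[0], y - origin[1]
        if px * ux + py * uy <= 0:
            continue  # behind the pointing hand
        off_ray = abs(px * uy - py * ux)  # perpendicular distance to the ray
        if off_ray < best_dist:
            best, best_dist = name, off_ray
    return best


def handle_utterance(text, objects, origin, direction, forbidden):
    """Add the pointed-at object to `forbidden` for a 'do not touch that' command."""
    if text.lower().strip(" .!") == "do not touch that":
        target = pointed_object(objects, origin, direction)
        if target is not None:
            forbidden.add(target)


objects = {"glass vase": (2.0, 0.1), "book": (2.0, 1.5), "remote": (-1.0, 0.0)}
forbidden = set()
handle_utterance("Do not touch that!", objects, (0.0, 0.0), (1.0, 0.0), forbidden)
print(forbidden)            # {'glass vase'}
print("book" in forbidden)  # False
```

A planner would then skip any action whose target is in `forbidden`, so the constraint persists after the gesture ends.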

Why VLA Models Define the Next Generation of Robotics

Next-gen robots are expected to be adaptable helpers rather than specialized machines. Vision-language-action models provide the cognitive foundation for this shift. They allow robots to learn continuously, communicate naturally, and act robustly in the physical world.

The significance of these models goes beyond technical performance. They reshape how humans collaborate with machines, lowering barriers to use and expanding the range of tasks robots can perform. As perception, language, and action become increasingly unified, robots move closer to being general-purpose partners that understand our environments, our words, and our goals as part of a single, coherent intelligence.